An author for IEEE asked me last week to write, for an article he’s working on, a high-level introduction to the World Wide Web Consortium and what its day-to-day work looks like.

Most of the time when we get asked, we pull from boilerplate descriptions, and/or from the website, and send a copy-paste and links. It takes less than a minute. But every now and then, I write something from scratch. It brings me right back to why I am in awe of what the web community does at the Consortium, and why I am so proud and grateful to be a small part of it.

Then that particular write-up becomes my favourite until the next time I’m in the mood to write another version. Here’s my current best high-level introduction to the World Wide Web Consortium and what its day-to-day work looks like, adorned with home-made illustrations I showed during a conference talk a few years ago.

World Wide What Consortium?

The World Wide Web Consortium was created in 1994 by Tim Berners-Lee, a few years after he had invented the World Wide Web. He did so in order for the interests of the Web to be in the hands of the community.

“If I had turned the Web into a product, it would have been in people’s interest to create an incompatible version of it.”

Tim Berners-Lee, inventor of the Web

So for almost 28 years, W3C has been developing standards and guidelines to help everyone build a web that is based on crucial and inclusive values: accessibility, internationalization, privacy and security, and the principle of interoperability. Pretty neat, huh? Pretty broad too!

From the start W3C has been an international community where member organizations, a full-time staff, and the public work together in the open.

The sausage

In web standards folklore, the product (web standards) is called “the sausage,” tongue in cheek. (That’s one of the reasons we made black aprons with a white embroidered W3C icon on the front, as a gift to our Members and group participants when a big meeting took place in Lyon, the capital of French cuisine.)

Since 1994, W3C Members have produced 454 standards. The most well-known are HTML and CSS because they have been so core to the web for so long. In recent years, in particular since the Covid-19 pandemic, we’ve heard a lot about WebRTC, which turns terminals and devices into communication tools by enabling real-time audio/video. Other well-known standards include XML, which powers vast data systems on the web; WebAuthn, which significantly improves security while interacting with sites; and the Web Content Accessibility Guidelines, which put web accessibility to the fore and are critical to making the web available to people of all disabilities and all abilities.

The sausage factory

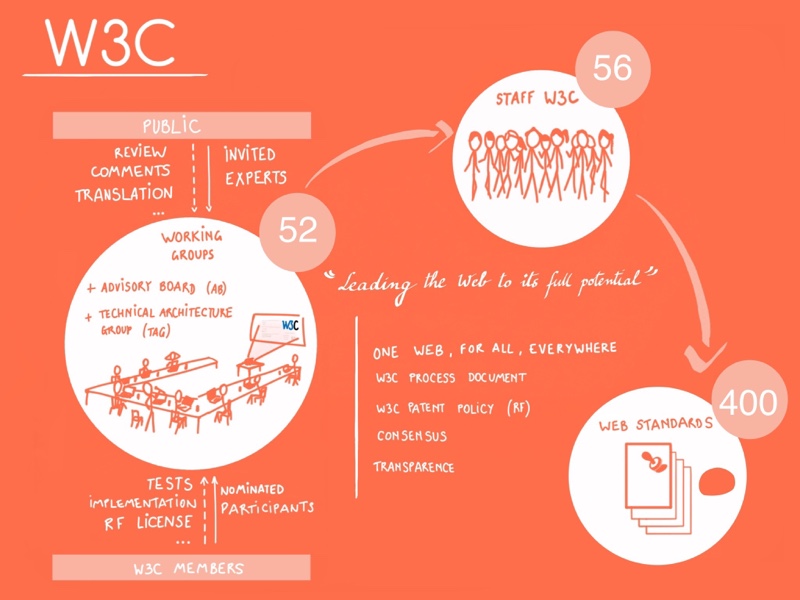

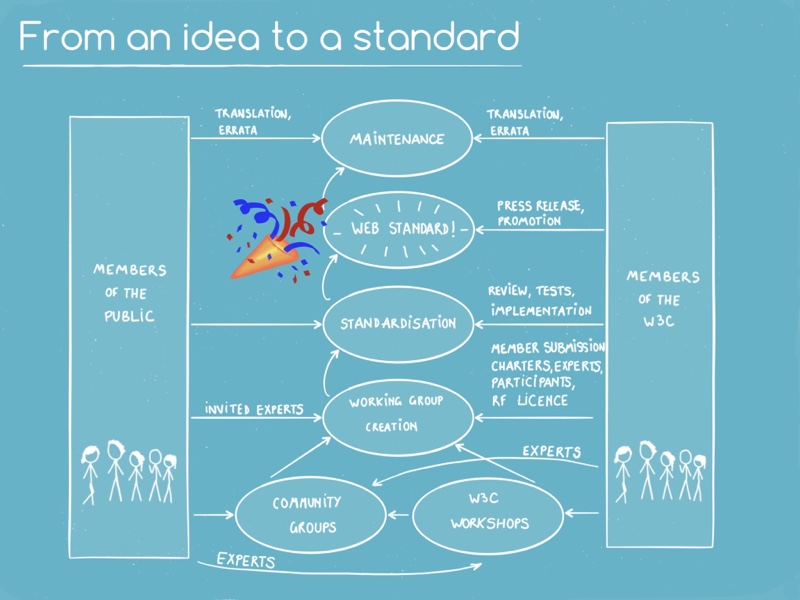

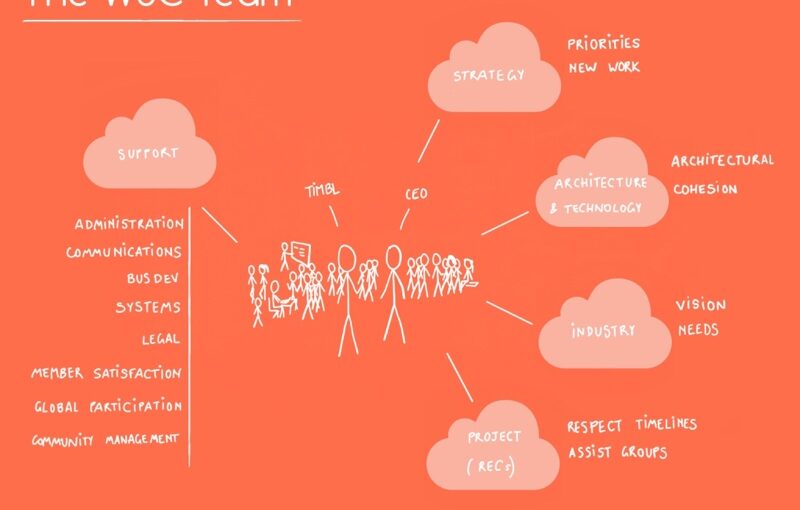

Our day-to-day work is really about setting the stage to bring various groups together, in parallel, to make progress on nearly 400 specifications (at the moment), developed in over 50 different groups.

There are 2,000 participants from W3C Members in those groups, and over 13,000 participants in the public groups that anyone can create and join, where specifications are typically socialized and incubated.

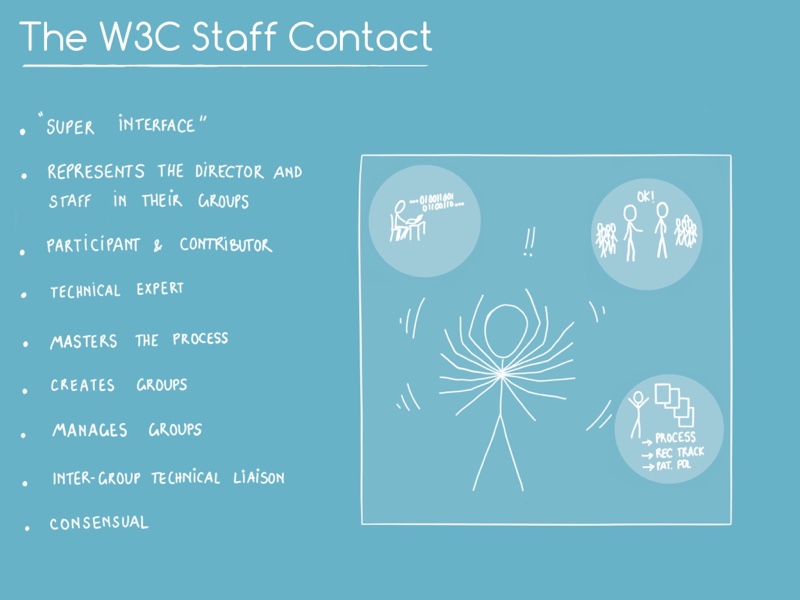

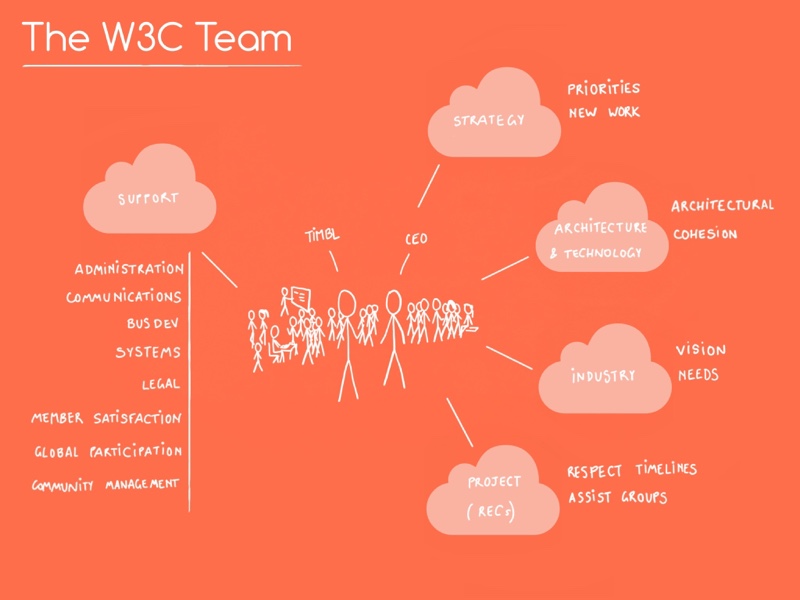

There are about 50 people on the W3C staff, a quarter of whom dedicate time as helpers to advise on the work and technologies, and to ensure easy “travel” on the Recommendation track for groups that advance web specifications following the W3C process (the steps through which specs must progress).

The rest of the staff operate at the level of strategy setting and tracking for technical work; the soundness and technical integrity of the global work; meeting the particular needs of industries which rely on the web or leverage it; and the integrity of the work with regard to the values that drive us: accessibility, internationalization, privacy and security. Finally, there is recruiting members, doing marketing and communications (that’s where I fit!), running events for the work groups to meet, and general administrative support.

Why does it work?

Two of the unique strengths of W3C are our proven process, which is optimized to seek consensus and aim for quality work, and our ground-breaking Patent Policy, whose royalty-free commitments boost broad adoption. W3C standards may be used by any corporation, by anyone, at no cost; if they were not free, developers would ignore them.

There are other strengths but in the interest of time, I’ll stop at the top two. There are countless stories and many other facets, but that would be for another time.

Sorry, it turned out to be a bit long because it’s hard to do a quick intro; there is so much work. If you’re still with me (hi!), did you learn anything from this post?

![xkcd comic 1831: Our field has been struggling with this problem for years. [one person grabs a computer and exclaims:] 'struggle no more: I'm here to solve it with algorithms!' [person at computer while others watch] last vignette, 6 months later: 'Wow, this problem is really hard,' says the person at the computer. 'You don't say.' answers the other person.](https://blog.koalie.net/wp-content/uploads/2024/01/here_to_help.png)